Power BI is a business intelligence tool that lets organizations create interactive reports and dashboards. To keep your data up to date, Power BI lets you schedule data refreshes using Power BI Dataflows. However, when working with data sources like Azure Data Lake Gen2, you may encounter issues like the “Server is Busy” error. In this blog post, we will explore the common causes of the ‘503 Server is Busy’ error and how to fix it.

The Problem

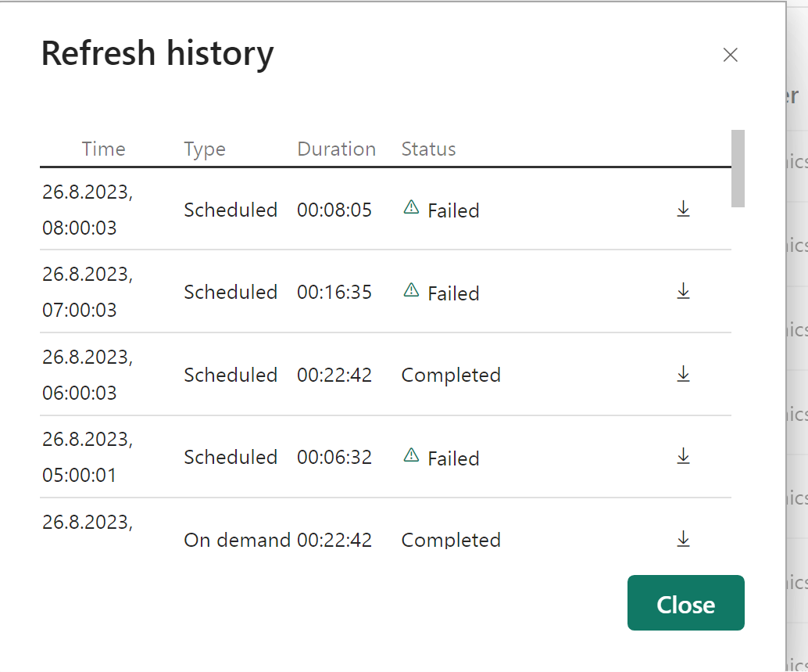

If, like me, you have set up a Power BI workspace with multiple Dataflows that consume data from Azure Blob storage (Azure Data Lake Gen2), you should continue reading this article. My data originally comes from Dynamics 365 CRM / Dataverse and is synchronized to Azure Blob storage by the Azure Synapse Link service. Periodically, I encountered the following error when attempting to refresh my Dataflows: “AzureBlobs failed to get contents from […] Status code: 503 description: ‘The server is busy.’. Request ID: […]”. For me, it mostly happened on scheduled refreshes.

Understanding the 503 Error

The key to fixing the ‘503 Server is Busy’ error is understanding it. When Power BI reports this error, it typically means that the data source (in this case the Azure Data Lake Gen2 service) has reached its rate limits. Azure services, including Azure Data Lake Gen2, have rate limits in place to prevent excessive usage and ensure fair resource allocation.

The limits and targets for Blob storage, on which Azure Data Lake Gen2 is built, can be found in the table below.

| Resource | Target |
|---|---|
| Maximum size of single blob container | Same as maximum storage account capacity |
| Maximum number of blocks in a block blob or append blob | 50,000 blocks |
| Maximum size of a block in a block blob | 4000 MiB |
| Maximum size of a block blob | 50,000 x 4000 MiB (approximately 190.7 TiB) |
| Maximum size of a block in an append blob | 4 MiB |
| Maximum size of an append blob | 50,000 x 4 MiB (approximately 195 GiB) |
| Maximum size of a page blob | 8 TiB |
| Maximum number of stored access policies per blob container | 5 |
| Target request rate for a single blob | Up to 500 requests per second |
| Target throughput for a single page blob | Up to 60 MiB per second |
| Target throughput for a single block blob | Up to storage account ingress/egress limits |

As the documentation states: “When your application reaches the limit of what a partition can handle for your workload, Azure Storage begins to return error code 503 (Server Busy) or error code 500 (Operation Timeout) responses. If 503 errors are occurring, consider modifying your application to use an exponential backoff policy for retries. The exponential backoff allows the load on the partition to decrease, and to ease out spikes in traffic to that partition.”
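To make the idea of exponential backoff concrete, here is a minimal Python sketch. It only applies where you control the client code that reads from the storage account (for example, a custom script), since a Dataflow refresh does not expose its retry logic this way. `ServerBusyError`, `backoff_delays`, and `call_with_backoff` are hypothetical names made up for this illustration, and the default delays are assumptions, not values recommended by Azure.

```python
import random
import time


class ServerBusyError(Exception):
    """Hypothetical stand-in for an HTTP 503 'Server Busy' response."""


def backoff_delays(max_retries, base_delay=1.0, max_delay=60.0):
    """Upper bound of the wait before each retry: base_delay * 2**n, capped."""
    return [min(max_delay, base_delay * 2 ** attempt) for attempt in range(max_retries)]


def call_with_backoff(request, max_retries=5, base_delay=1.0, max_delay=60.0,
                      sleep=time.sleep, rng=random.random):
    """Call request(); on ServerBusyError wait with exponential backoff and retry."""
    for cap in backoff_delays(max_retries, base_delay, max_delay):
        try:
            return request()
        except ServerBusyError:
            # Jitter: sleep a random fraction of the cap so that parallel
            # clients do not all retry at the same moment.
            sleep(cap * rng())
    # Final attempt: if the server is still busy, let the error propagate.
    return request()
```

With the defaults, the wait before each retry is capped at 1, 2, 4, 8, and 16 seconds. The growing pauses give the overloaded partition time to recover instead of hitting it again immediately.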

To prevent the error, you can check for the following causes (addressing the first one fixed it for me):

- Simultaneous Refreshes: When multiple Dataflows in your Power BI workspace are refreshed simultaneously, it can overload the Azure Data Lake Gen2 service, leading to rate limit violations. In this case, make sure that every Dataflow reads from the data source at a different time (1am, 2am, 3am… you get the point).

- High Data Volume: If your Dataflows are processing large volumes of data, they may consume more resources, pushing the service closer to its rate limits.

- Frequent Data Updates: If new data is continuously flowing into Azure Blob storage from Azure Synapse Link or other sources, it can exacerbate the rate limit issues.
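The staggering idea from the first point can be sketched as a small Python helper that assigns each Dataflow its own start time. This only plans the times; you would still enter them in each Dataflow's scheduled refresh settings yourself. The function name `staggered_times` and the Dataflow names are hypothetical, and the one-hour gap is an assumption that should be at least as long as your longest refresh.

```python
from datetime import datetime, timedelta


def staggered_times(dataflows, first_start="01:00", gap_minutes=60):
    """Assign each dataflow its own start time, gap_minutes apart."""
    start = datetime.strptime(first_start, "%H:%M")
    return {
        name: (start + timedelta(minutes=i * gap_minutes)).strftime("%H:%M")
        for i, name in enumerate(dataflows)
    }


# Hypothetical Dataflow names, for illustration only.
schedule = staggered_times(["Accounts", "Contacts", "Opportunities"])
print(schedule)
# {'Accounts': '01:00', 'Contacts': '02:00', 'Opportunities': '03:00'}
```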

However, if debugging does not resolve the problem and you consistently encounter rate limit errors, you can contact Azure support to ask about increasing the rate limits for your Azure Data Lake Gen2 service. They may be able to adjust the limits based on your specific requirements. Keep in mind that you need a paid support plan to create support tickets in Azure.

Conclusion

Encountering the “Server is Busy” error in Power BI due to Azure Data Lake Gen2 rate limits is a common challenge when working with large datasets and frequent data updates. By staggering refresh schedules, keeping an eye on data volume and update frequency, and contacting Azure support when needed, you can mitigate these issues and ensure your Power BI Dataflows remain reliable and up to date.

Remember that the key to a successful data integration strategy is a combination of smart scheduling, resource management, and proactive monitoring to address any potential bottlenecks and keep your Power BI reports running smoothly.